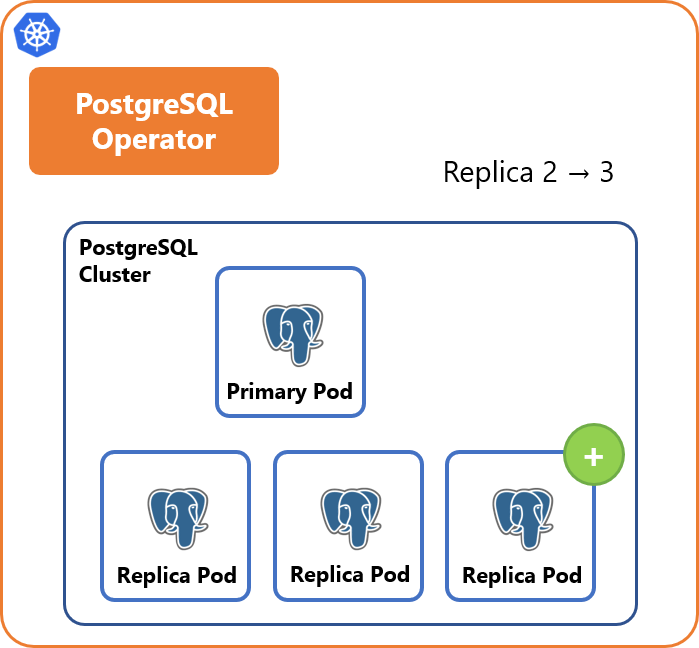

If the attempt is unsuccessful, the operator begins to deploy a new PostgreSQL instance by copying data from the primary. It checks whether there is a PVC with PGDATA on the node for each new Pod and tries to use it by applying the missing WAL. The way the operator uses local storage on the K8s nodes deserves special mention.

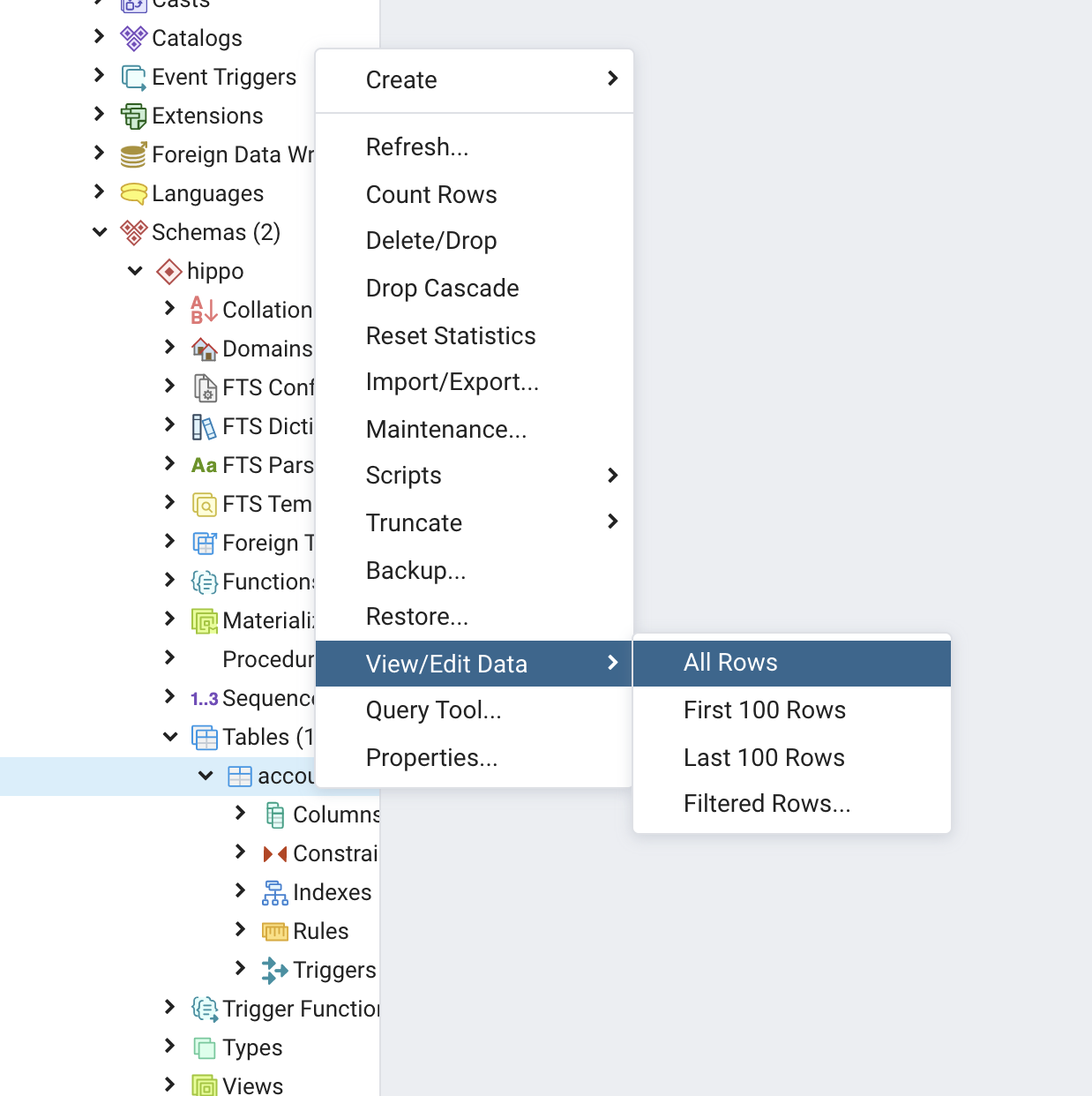

minSyncReplicas and maxSyncReplicas control the number of synchronous replicas. The operator supports asynchronous (default) and synchronous (quorum-based) streaming replication. To do so, increase the number of replicas in the cnpg-controller-manager Deployment. For that reason, we recommend running multiple operator Pods to keep the cluster running smoothly. If there are any unexpected errors on the primary, the operator promotes the instance with minimal replication delay to the primary.Ĭuriously, the operator creates Pods with database instances instead of ReplicaSets or StatefulSets. After restarting, the data is synchronized with the master, and the instance is activated, provided that all checks were successful. If the liveness probe fails for some secondary PostgreSQL instance, the latter is marked broken, disconnected from -ro and -r services, and restarted. Instead, each Pod gets its own Instance Manager (available at /controller/manager) that directly interacts with the Kubernetes API. This operator’s signature feature is avoiding external failover management tools, such as Patroni or Stolon (we reviewed it here). Architecture, replication, and fault tolerance You can manually create additional databases and users as a postgres superuser or run the queries specified in the section after initializing the cluster. This approach has many advantages refer to Frequently Asked Questions (FAQ) to learn more about them. Thus, when creating a cluster via the initdb method, you can specify just one database in the manifest. The operator authors recommend creating a dedicated PostgreSQL cluster for each database. Kind: Secret # Secret with the database credentials Here is a sample manifest defining a grafana-pg cluster of 3 instances with local data storage:. This option can be helpful in migrating the existing database. 
copying data from the existing PostgreSQL database ( pg_basebackup).Note that point-in-time recovery (PITR) is also supported restoring it from a backup ( recovery).

The spec.bootstrap section of the Cluster resource lists the available cluster deployment options: In addition to the official PostgreSQL Docker images, you can use custom images that meet the conditions listed in the Container Image Requirements. The operator works with all the supported PostgreSQL versions. Once the installation is complete, the Cluster, Pooler, Backup, and ScheduledBackup CRD objects will become available (we’ll get to them later). To install CloudNativePG in Kubernetes, download the latest YAML manifest and apply it using kubectl apply. The operator is flexible and easy to use, with a wide range of functions and detailed documentation. In late April 2022, EnterpriseDB released CloudNativePG, an Open Source PostgreSQL Apache 2.0-licensed operator for Kubernetes.

In this piece, we’ll discuss CloudNativePG along with its features and capabilities and go on to update our comparison to include the new operator. We quickly looked at them and consolidated their features in a comparison table. The last part compared the Stolon, Crunchy Data, Zalando, KubeDB, and StackGres operators. This article is a continuation of our series on PostgreSQL Kubernetes operators.
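To make the manifest-level settings discussed in this article more concrete, here is a minimal sketch of a Cluster resource bootstrapped via initdb, with a credentials Secret and quorum-based synchronous replication. It is an illustration under stated assumptions, not the original sample manifest: the Secret name, the database and owner names, the password, the storage size, and the storage class are all placeholders, and the exact set of fields may differ between operator versions.

```yaml
# Secret with the database credentials.
# Assumption: the name "grafana-pg-user" and both values are placeholders.
apiVersion: v1
kind: Secret
metadata:
  name: grafana-pg-user
type: kubernetes.io/basic-auth
stringData:
  username: grafana        # should match spec.bootstrap.initdb.owner below
  password: change-me
---
apiVersion: postgresql.cnpg.io/v1
kind: Cluster
metadata:
  name: grafana-pg
spec:
  instances: 3             # one primary and two replicas
  # Quorum-based synchronous replication; omit both fields to keep
  # the default asynchronous mode.
  minSyncReplicas: 1
  maxSyncReplicas: 1
  bootstrap:
    initdb:                # create a new cluster with a single database
      database: grafana    # assumed database name
      owner: grafana       # assumed owner name
      secret:
        name: grafana-pg-user
  storage:
    size: 1Gi                     # assumed size
    storageClass: local-storage   # assumed class backed by node-local volumes
```

Both objects can be kept in one file and applied with kubectl apply. Since the operator authors recommend one database per cluster, the sketch declares a single database in the initdb section.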
